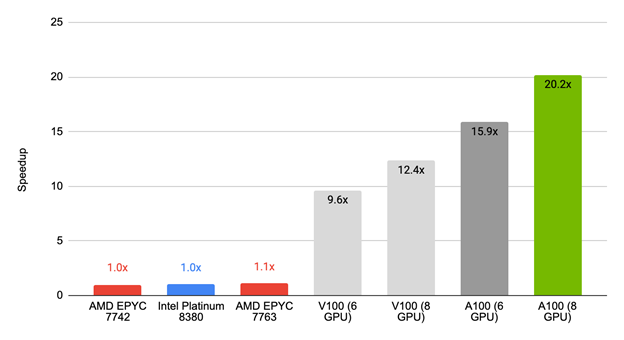

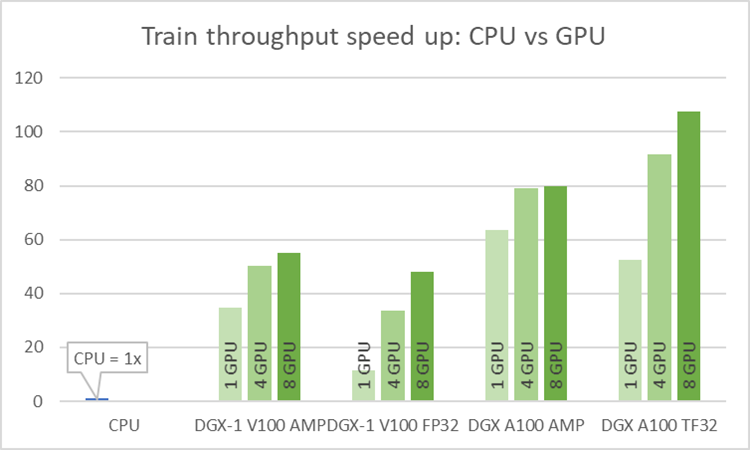

Accelerating the Wide & Deep Model Workflow from 25 Hours to 10 Minutes Using NVIDIA GPUs | NVIDIA Technical Blog

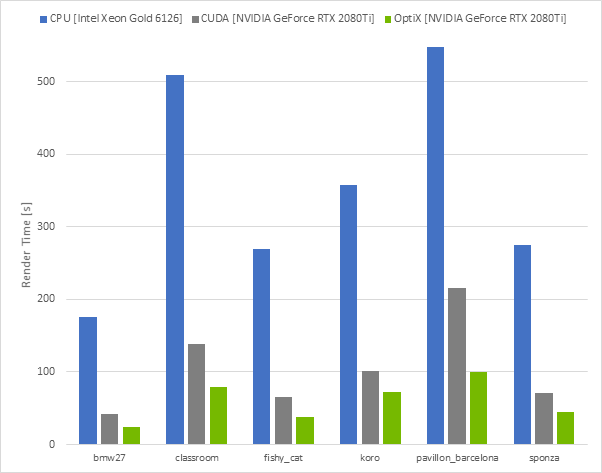

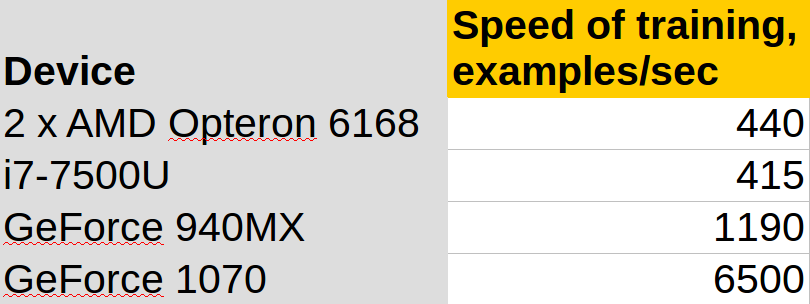

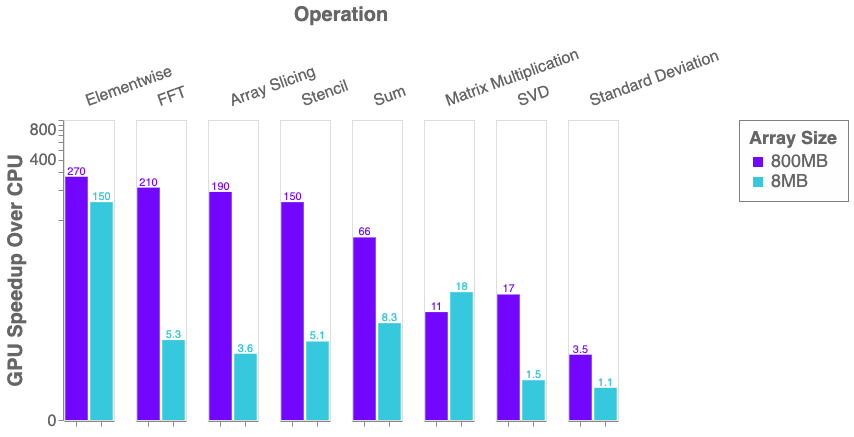

Python, Performance, and GPUs. A status update for using GPU… | by Matthew Rocklin | Towards Data Science

Nvidia plans to employ GPUs and AI to speed up and improve semiconductor design in the future - Bhive Design Blog