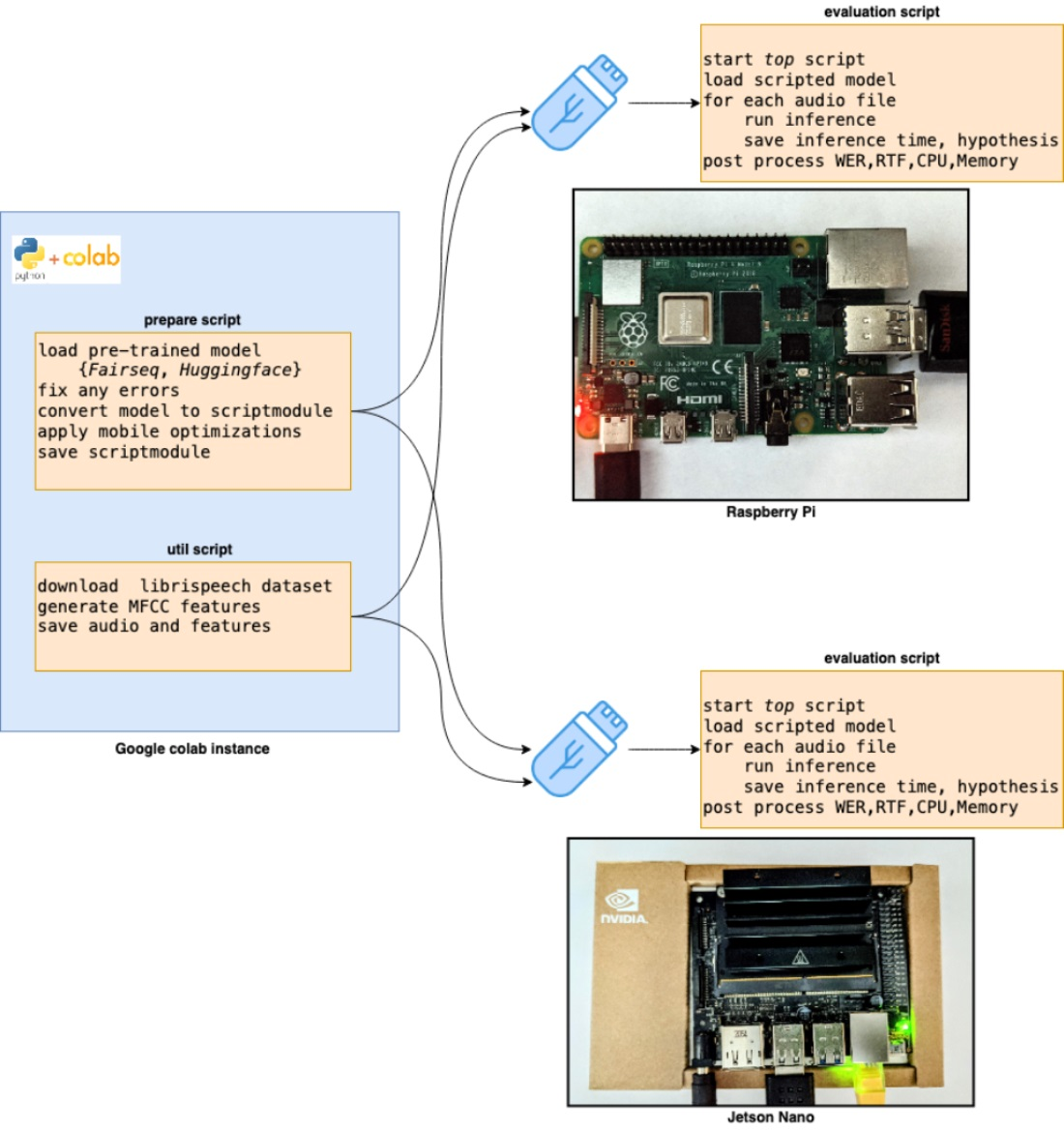

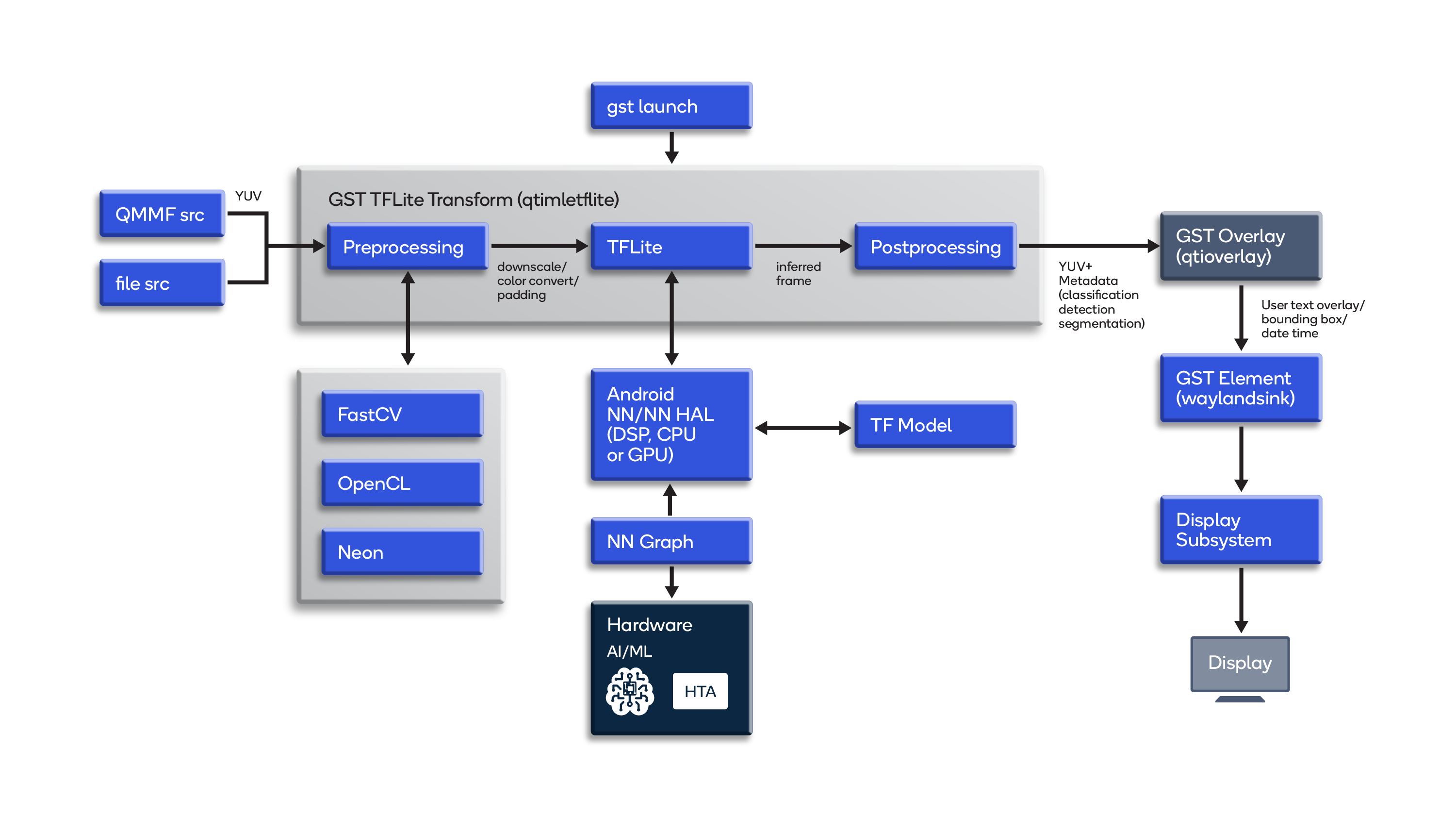

Parallelizing across multiple CPU/GPUs to speed up deep learning inference at the edge | AWS Machine Learning Blog

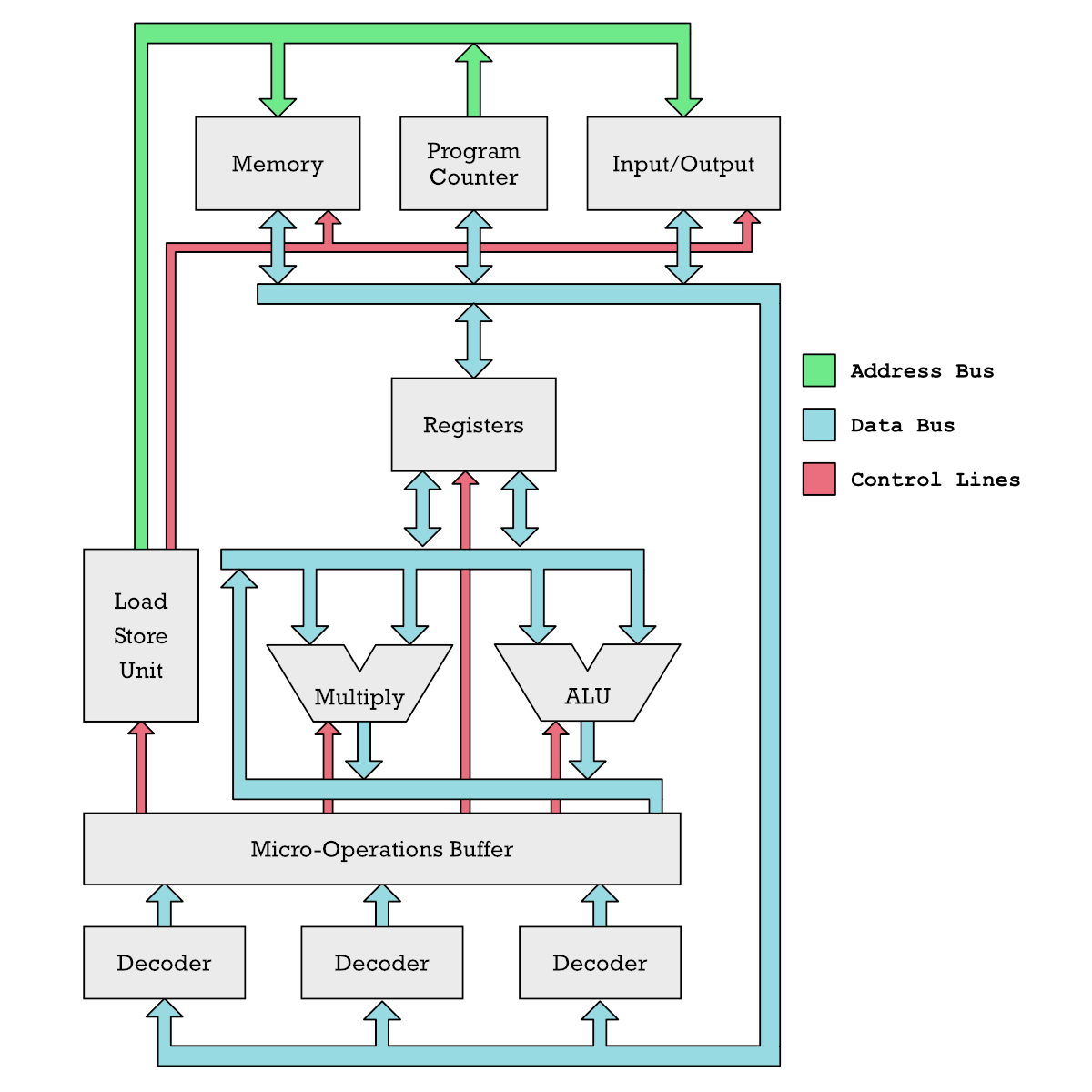

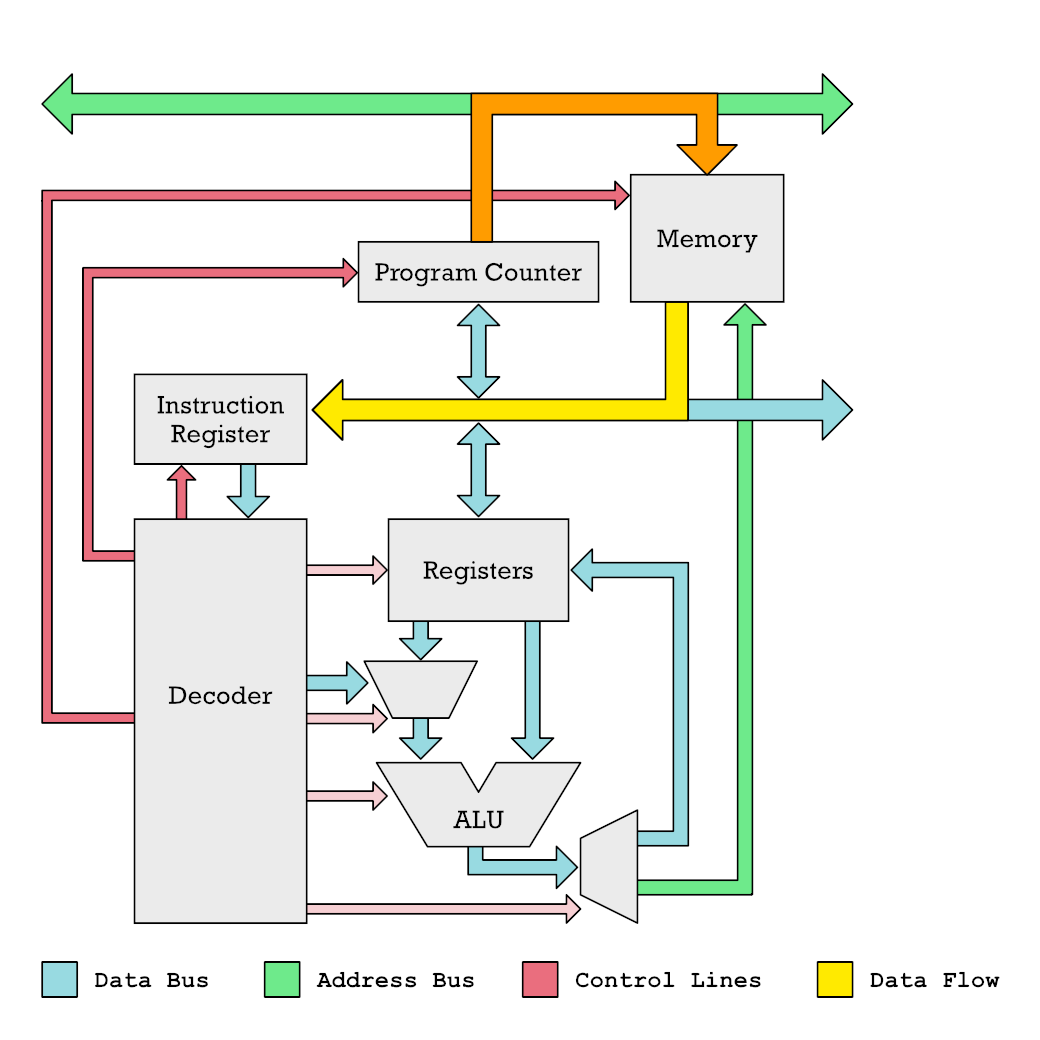

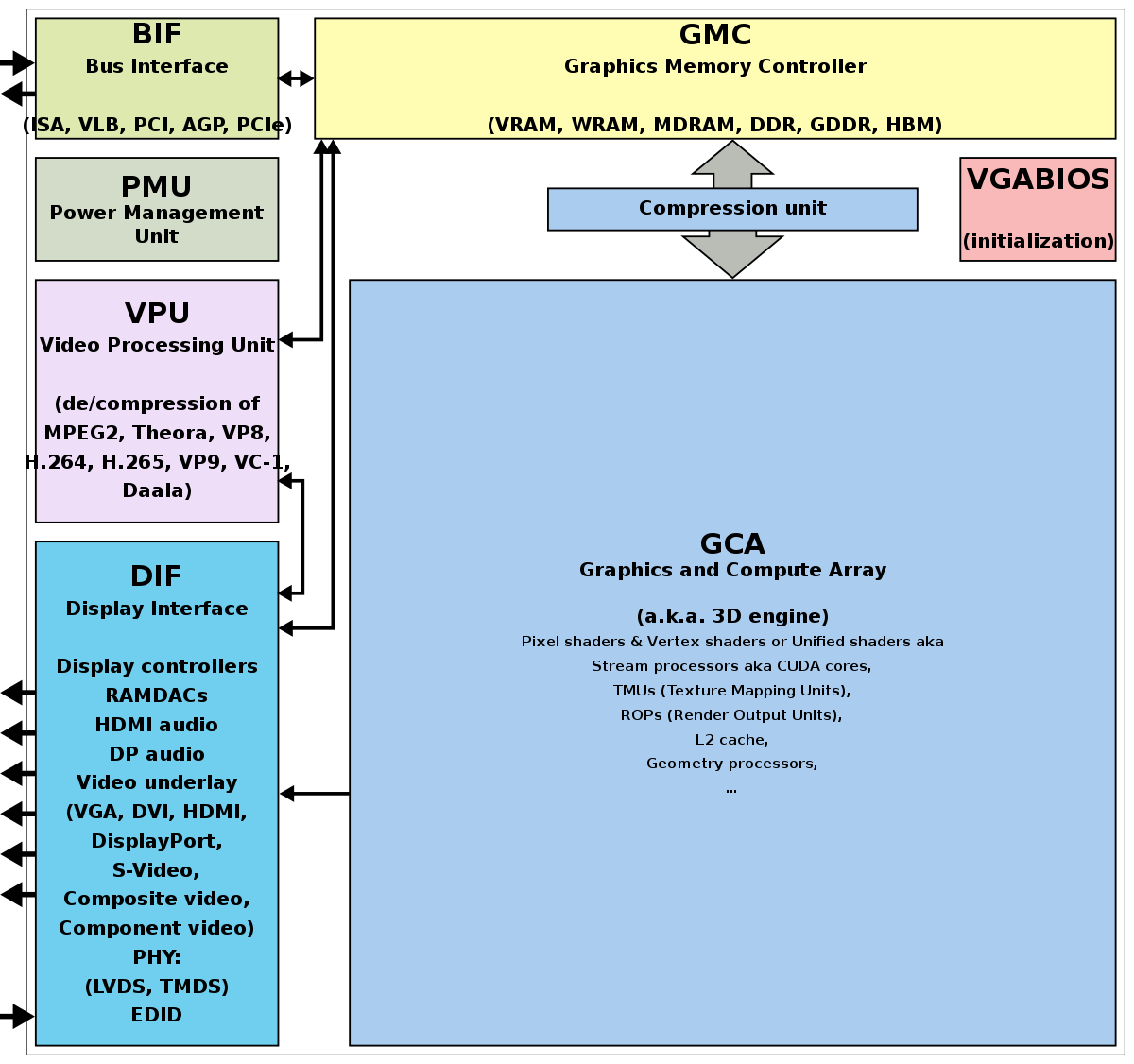

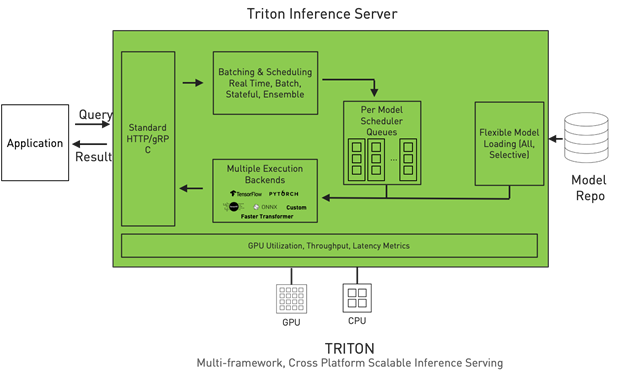

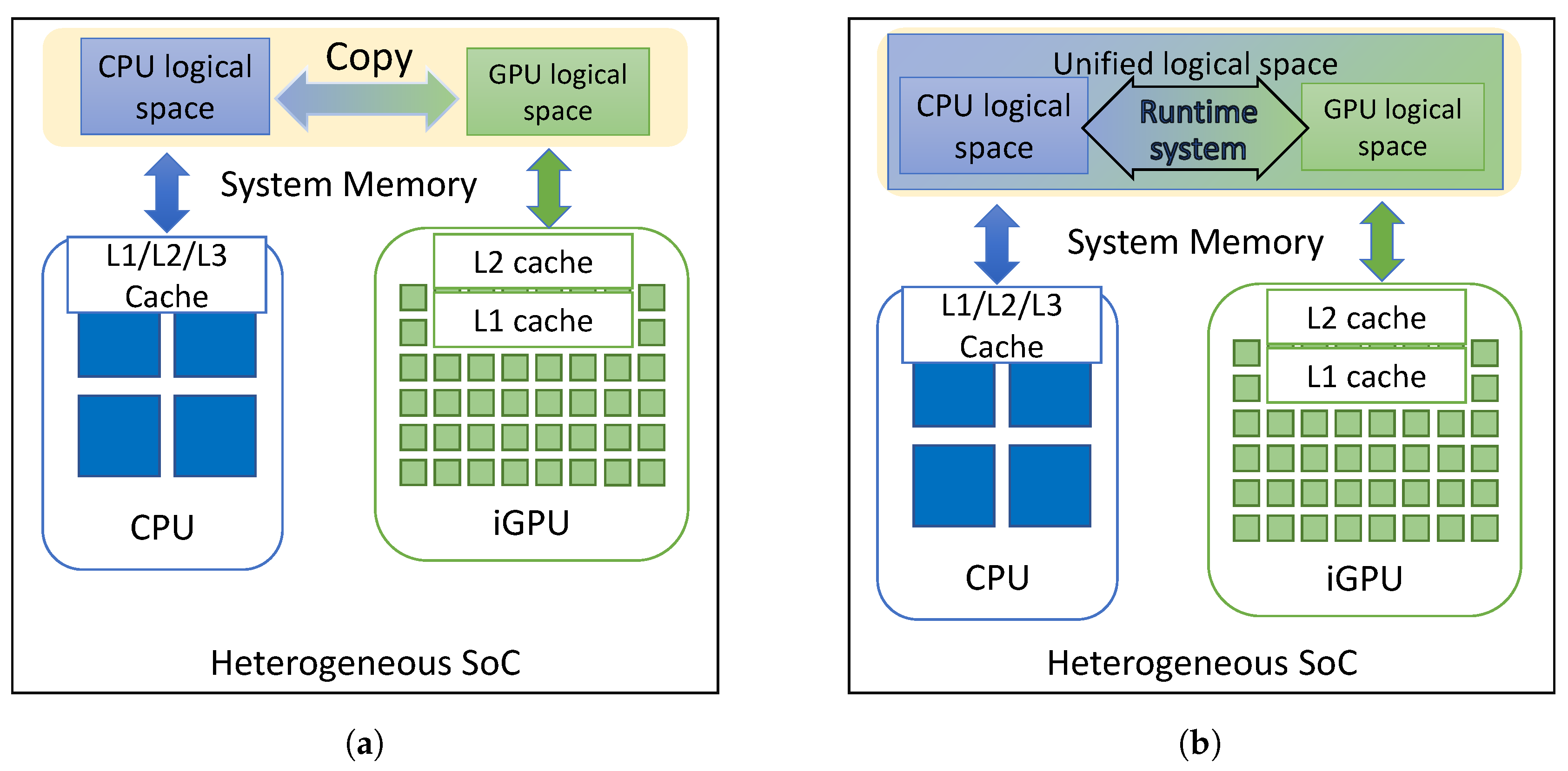

The description on load sharing among the CPU and GPU(s) components...

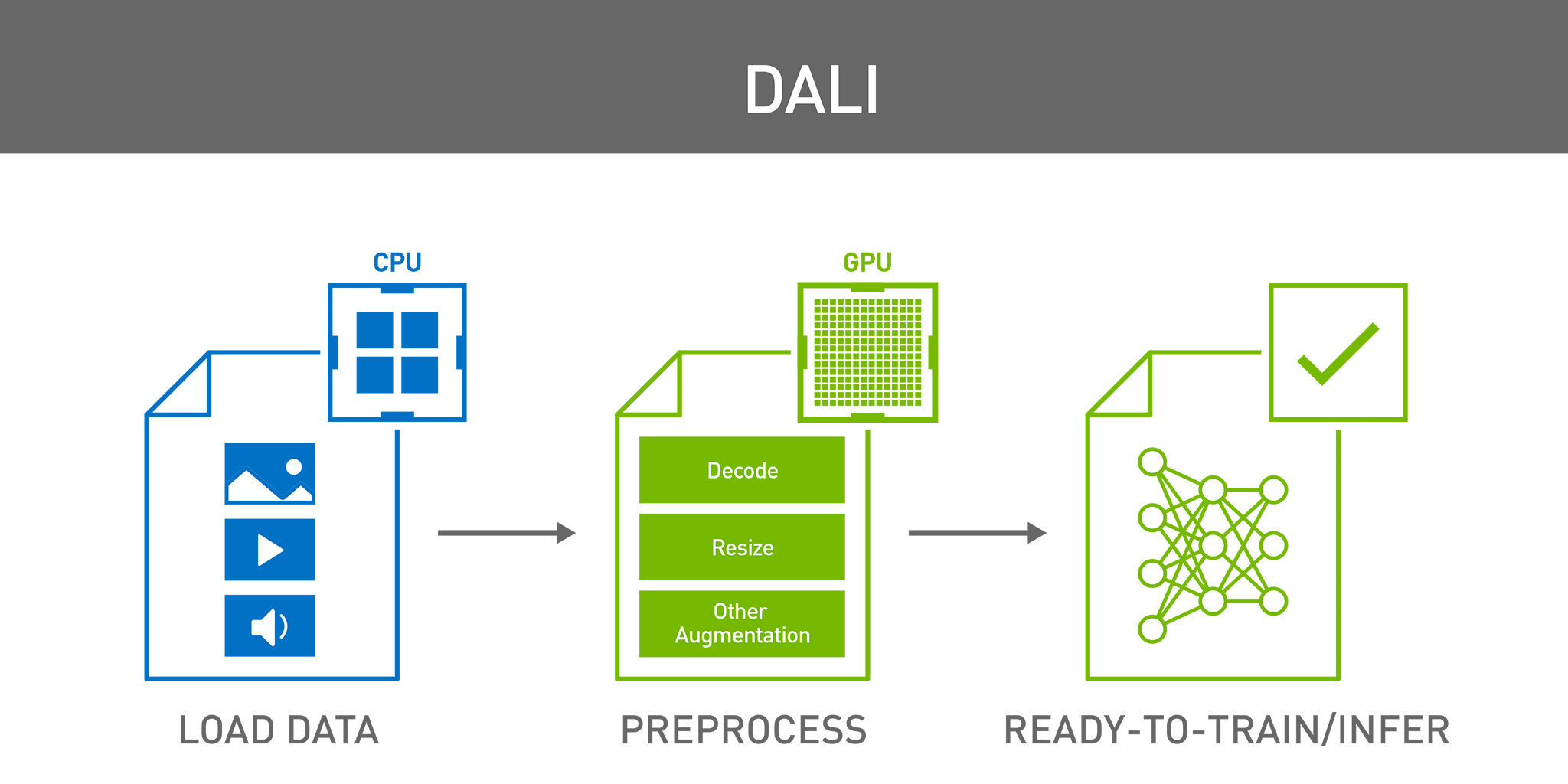

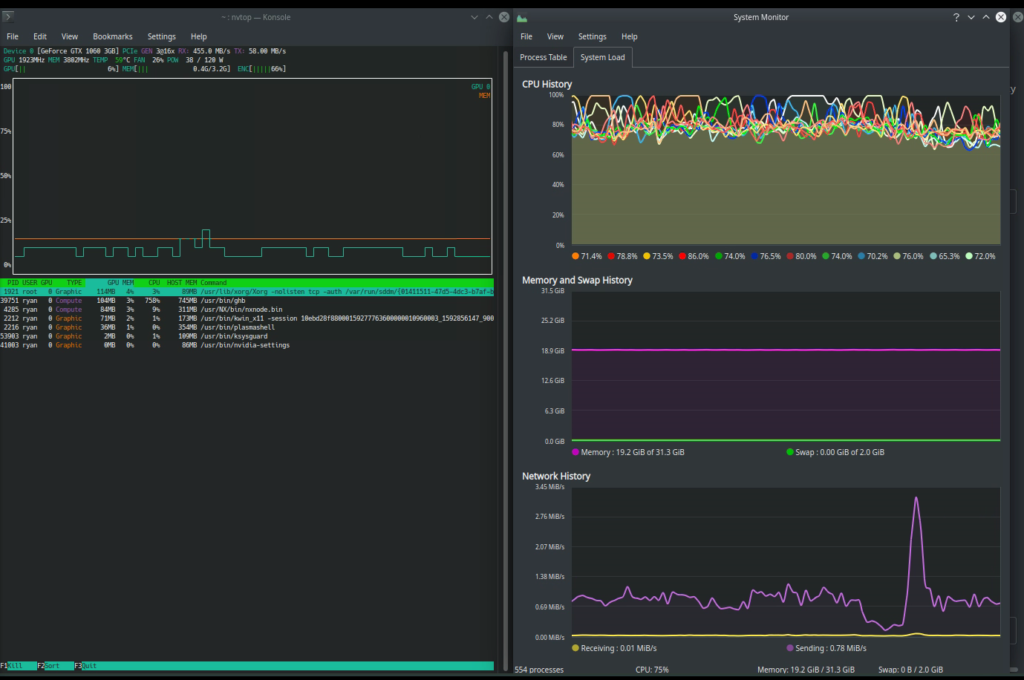

How distributed training works in Pytorch: distributed data-parallel and mixed-precision training | AI Summer